What is Artificial Intelligence?

A recent Financial Conduct Authority (FCA) discussion paper, DP22/4: Artificial Intelligence, offered the following definition of Artificial Intelligence (AI):

‘It is generally accepted that AI is the simulation of human intelligence by machines, including the use of computer systems, which have the ability to perform tasks that demonstrate learning, decision-making, problem solving, and other tasks which previously required human intelligence. Machine learning is a sub-branch of AI.

AI, a branch of computer science, is complex and evolving in terms of its precise definition. It is broadly seen as part of a spectrum of computational and mathematical methodologies that include innovative data analytics and data modelling techniques.’

- What do firms need to consider?

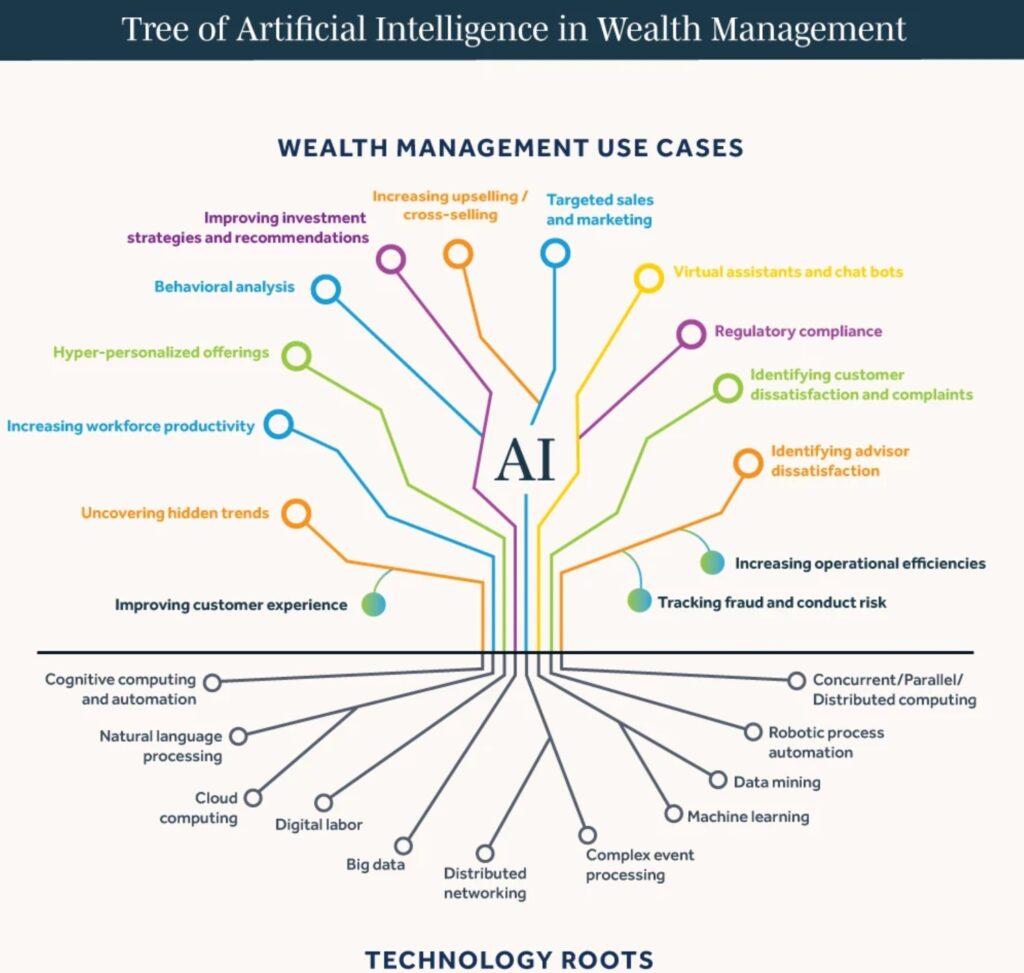

Many PIMFA members are already using AI systems and tools in their everyday operations, and it is likely that the adoption of AI in financial services will increase rapidly over the next few years.

PIMFA firms use AI tools for several purposes. For example, these tools can analyse large volumes of data quickly and accurately, which frees employees to spend more time working with and for their clients.

There are concerns that, as AI becomes more advanced, it could introduce new risks. For example, a system could develop biases in its decision-making, leading firms to make bad decisions and, in turn, to poor outcomes for their clients.

This is why firms deploying AI systems must have a suitably robust control framework around their AI components, so they can keep a careful check on what those components are doing.

As with any innovation, AI has the potential to make fundamental and far-reaching improvements in how firms serve their clients. However, it must be monitored and checked continually to manage the risks and maximise the benefits.

- What are regulators doing?

A number of government departments are asking regulators, including the Financial Conduct Authority (FCA), the Bank of England (BoE), the Information Commissioner's Office (ICO) and the Competition and Markets Authority (CMA), to publish an update on their strategic approach to AI and the steps they are taking in line with the Government's AI White Paper. The Secretary of State has asked for these updates by 30 April 2024.

On 13 March 2024, the European Parliament approved the EU Artificial Intelligence Act. The EU AI Act sets out a comprehensive legal framework governing AI, establishing EU-wide rules on data quality, transparency, human oversight and accountability. It contains some challenging requirements, has broad extraterritorial effect and carries potentially large fines for non-compliance.

Maria Fritzsche

Senior Policy Adviser - Operational Policy, Regulation and Innovation Lead


Latest news

New PIMFA Podcast: Beyond the hype: what a real human-AI operating model looks like

On the latest PIMFA podcast, Richard Doherty of Publicis Sapient and Richard Preece of DA Resilience argue that bolting AI onto existing workflows isn’t transformation; it’s window dressing. The real work is redesigning how humans and machines share the work itself: repeatable, data-heavy tasks go to AI; high-risk, trust-critical calls go to humans; and everything else is human-in-the-loop.

Frontier AI and Cyber Resilience: Bank of England, FCA and HM Treasury Joint Statement

The Bank of England (BoE), FCA and HM Treasury (HMT) have issued a joint statement on the cyber resilience implications of frontier AI for regulated firms and financial market infrastructures.

According to the statement, the cyber capabilities of current frontier AI models already exceed what a skilled practitioner could achieve, at significantly higher speed, greater scale and lower cost. If used maliciously, these capabilities amplify cyber threats to firms’ safety and soundness, customers, market integrity and financial stability. Firms that have underinvested in core cyber security fundamentals are likely to become progressively more exposed as more advanced models emerge.

Drawing on the effective practices on cyber resilience published by the Bank, the Prudential Regulation Authority (PRA) and the FCA in October 2025, the authorities identify five domains for firm action: governance and strategy; vulnerability management; managing third-party risks; protection; and response and recovery.

Read the full statement here.

Don’t be Complacent About AI Compliance

In this article from PIMFA Journal #33, Vicky Pearce of B-Compliant explains how to use AI safely and compliantly. Read it here.

AI Preparedness and Financial Services Growth: Financial Services Skills Commission Report

The Financial Services Skills Commission (FSSC) has published a new HMT-commissioned report, A Workforce Transformed, examining the impact of AI and other disruptive technologies on the financial services workforce.

The report finds that up to 50% of tasks in most financial services roles could be automated, and that the sector will need to recruit and train 450,000 highly skilled people over the next ten years. While the opportunities for productivity and growth are significant, it warns that failure to address skills gaps at the scale of the challenge poses a serious threat to economic growth. A second phase of research focused on practical recommendations for firms, government and the wider skills system is expected by early 2027.

The FSSC is also working with HMT and Skills England on a Skills Compact, a commitment by signatory firms to work together to close skills gaps in UK financial services, due to launch in summer 2026.

Read the full report here.

Those wishing to hear more can join the FSSC’s webinar on Thursday 4 June, where the key findings will be discussed. Register here.

The Wealth and Asset Management Operating Model Can’t Keep Up

In this article from PIMFA Journal #33, Richard Doherty and Sumit Johri of Publicis Sapient explain why the traditional playbook, built on manual processes, siloed business and technology functions, and relationship-driven models, is no longer sufficient. Read it here.

Talent and Controls – Where AI Initiatives Succeed or Stall

In this article from PIMFA Journal #33, Edward Russell of Solve explains how talent and controls support successful AI adoption. Read it here.

Leading Lights Forum Report 2025/26

AI: Evolution, Revolution or Devastation? Read the new Leading Lights Report

PIMFA